Getting started on Caviness

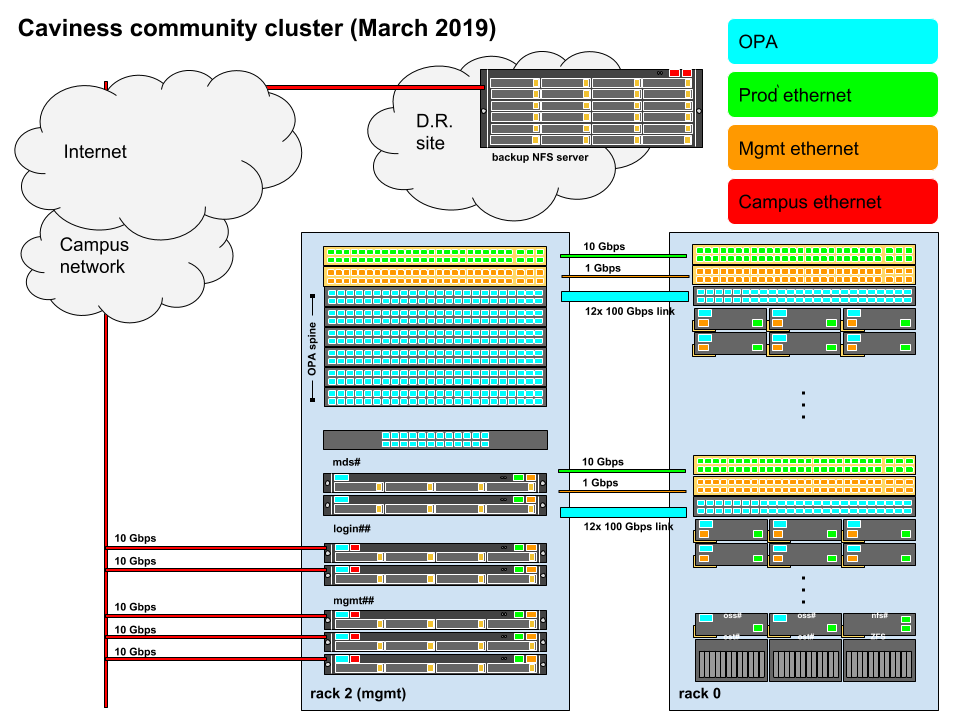

The Caviness cluster, UD's third Community Cluster, was deployed in July 2018 and is a distributed-memory Linux cluster. It is based on a rolling-upgradeable model for expansion and replacement of hardware over time. The current configuration (Generations 1, 2, 2.1 and 3) consists of 367 compute nodes, 15,104 traditional CPU cores, 49 GPUs, 121 TiB of RAM and 238 TB of NFS storage. An OmniPath network fabric supports high-speed communication and the Lustre filesystem (approximately 476 TB of usable space). Gigabit and 10-Gigabit Ethernet networks provide access to additional filesystems and the campus network. The cluster was purchased with a proposed five-year life for the first-generation hardware, putting its refresh in the April 2023 to June 2023 time period.

For general information and specifications about the Caviness cluster, visit the IT Research Computing website. To cite the Caviness cluster for grants, proposals and publications, use these HPC templates.

Configuration

Overview

An HPC cluster always has one or more public-facing systems known as login nodes. The login nodes are supplemented by many compute nodes, which are connected by a private network. One or more head nodes run programs that manage and facilitate the functioning of the cluster. (In some clusters, the head node functionality is present on the login nodes.) Each compute node typically has several multi-core processors that share memory. Finally, all the nodes share one or more filesystems over a high-speed network.

Login nodes

Login (head) nodes are the gateway into the cluster and are shared by all cluster users. Their computing environment is a full standard variant of Linux configured for scientific applications. This includes command documentation (man pages), scripting tools, compiler suites, debugging/profiling tools, and application software. In addition, the login nodes have several tools to help you move files between the HPC filesystems and your local machine, other clusters, and web-based services.

If your work requires highly interactive graphics and animations, these are best done on your local workstation rather than on the cluster. Use the cluster to generate files containing the graphics information, and download them from the HPC system to your local system for visualization.

When you use SSH to connect to caviness.hpc.udel.edu, your computer will choose one of the login (head) nodes at random. The default command-line prompt indicates which login node you have connected to: for example, [traine@login01 ~]$ is shown for account traine when connected to login node login01.caviness.hpc.udel.edu.

Resource limits are of critical importance on cluster login nodes. Without effective limits in place, a single user could monopolize a login node and leave the cluster inaccessible to others. Please review Per-process CPU time limits on Caviness login nodes, which summarizes the current resource limits and explains the need for, and implementation of, additional limits on the Caviness cluster login nodes.
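
To see which CPU time limits apply to your own session, you can query them from a process running on the login node. The following is a minimal sketch using Python's standard `resource` module. It only reports what the current process sees and assumes nothing about how the limits are configured on Caviness; the helper names `describe` and `cpu_time_limits` are made up for illustration.

```python
import resource


def describe(limit):
    """Render one rlimit value: a number of seconds, or 'unlimited'."""
    return "unlimited" if limit == resource.RLIM_INFINITY else f"{limit} s"


def cpu_time_limits():
    """Return the (soft, hard) per-process CPU time limits as readable strings."""
    soft, hard = resource.getrlimit(resource.RLIMIT_CPU)
    return describe(soft), describe(hard)


if __name__ == "__main__":
    soft, hard = cpu_time_limits()
    print(f"CPU time limit per process: soft={soft}, hard={hard}")
```

A process that exceeds its soft CPU time limit is normally sent a signal by the kernel, so long-running work belongs on the compute nodes rather than the login nodes.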

Compute nodes

There are many compute nodes with different configurations. Each node consists of multi-core processors (CPUs), memory, and local disk space. Nodes can have different OS versions or OS configurations, but this document assumes all the compute nodes have the same OS and almost the same configuration. Some nodes may have more cores, more memory, GPUs, or more disk.

| Rack # | Number of Nodes | Node Names | Total Cores | Memory per Node | Total Memory | Total GPUs | Feature-Generation |
|---|---|---|---|---|---|---|---|
| 0 | 2 | r00n00, r00n56 | 144 | 128 GiB | 256 GiB | | Gen1,E5-2695,E5-2695v4,128GB,HT |
| 0 | 28 | r00n01 - r00n17, r00n45 - r00n55 | 1,008 | 128 GiB | 3.5 TiB | | Gen1,E5-2695,E5-2695v4,128GB |
| 0 | 24 | r00n21 - r00n44 | 864 | 256 GiB | 6 TiB | | Gen1,E5-2695,E5-2695v4,256GB |
| 0 | 3 | r00n18 - r00n20 | 108 | 512 GiB | 1.5 TiB | | Gen1,E5-2695,E5-2695v4,512GB |
| 0 | 1 | r00g00 | 72 | 128 GiB | 128 GiB | p100:2 | Gen1,E5-2695,E5-2695v4,128GB,HT |
| 0 | 1 | r00g01 | 36 | 128 GiB | 128 GiB | p100:2 | Gen1,E5-2695,E5-2695v4,128GB |
| 0 | 2 | r00g02, r00g04 | 72 | 256 GiB | 512 GiB | p100:4 | Gen1,E5-2695,E5-2695v4,256GB |
| 0 | 1 | r00g03 | 36 | 512 GiB | 512 GiB | p100:2 | Gen1,E5-2695,E5-2695v4,512GB |
| Total | 62 | | 2,340 | | 12.5 TiB | 8 | Generation 1 |
| 1 | 2 | r01n00, r01n56 | 144 | 128 GiB | 256 GiB | | Gen1,E5-2695,E5-2695v4,128GB,HT |
| 1 | 28 | r01n01 - r01n17, r01n45 - r01n55 | 1,008 | 128 GiB | 3.5 TiB | | Gen1,E5-2695,E5-2695v4,128GB |
| 1 | 24 | r01n21 - r01n44 | 864 | 256 GiB | 6 TiB | | Gen1,E5-2695,E5-2695v4,256GB |
| 1 | 3 | r01n18 - r01n20 | 108 | 512 GiB | 1.5 TiB | | Gen1,E5-2695,E5-2695v4,512GB |
| 1 | 2 | r01g00 - r01g01 | 72 | 128 GiB | 256 GiB | p100:4 | Gen1,E5-2695,E5-2695v4,128GB |
| 1 | 3 | r01g02 - r01g04 | 108 | 256 GiB | 768 GiB | p100:6 | Gen1,E5-2695,E5-2695v4,256GB |
| Total | 62 | | 2,304 | | 12.25 TiB | 10 | Generation 1 |
| 2 | 2 | r02s00 - r02s01 | 72 | 256 GiB | 512 GiB | nvme:raid0:1 | Gen1,E5-2695,E5-2695v4,256GB |
| Total | 2 | | 72 | | 0.5 TiB | 0 | Generation 1 |
| 3 | 25 | r03n00 - r03n23, r03n28 | 1,000 | 384 GiB | 9.375 TiB | | Gen2,Gold-6230,6230,384GB |
| 3 | 1 | r03n27 | 40 | 768 GiB | 768 GiB | | Gen2,Gold-6230,6230,768GB |
| 3 | 3 | r03n24 - r03n26 | 120 | 1024 GiB | 3 TiB | | Gen2,Gold-6230,6230,1024GB |
| 3 | 3 | r03g00 - r03g02 | 120 | 192 GiB | 576 GiB | t4:3 | Gen2,Gold-6230,6230,192GB |
| 3 | 2 | r03g03 - r03g04 | 80 | 384 GiB | 768 GiB | t4:2 | Gen2,Gold-6230,6230,384GB |
| 3 | 1 | r03g05 | 40 | 384 GiB | 384 GiB | v100:2 | Gen2,Gold-6230,6230,384GB |
| 3 | 1 | r03g06 | 40 | 768 GiB | 768 GiB | v100:2 | Gen2,Gold-6230,6230,768GB |
| 3 | 2 | r03g07 - r03g08 | 80 | 768 GiB | 1.5 TiB | t4:2 | Gen2,Gold-6230,6230,768GB |
| 3 | 29 | r03n29 - r03n57 | 1,160 | 192 GiB | 5.4375 TiB | | Gen2,Gold-6230,6230,192GB |
| Total | 67 | | 2,680 | | 17.0625 TiB | 11 | Generation 2 |
| 4 | 51 | r04n00 - r04n23, r04n50 - r04n76 | 2,040 | 192 GiB | 9.5625 TiB | | Gen2.1,Gold-5218R,5218R,192GB |
| 4 | 7 | r04n24 - r04n29, r04n41 | 280 | 384 GiB | 2.625 TiB | | Gen2.1,Gold-5218R,5218R,384GB |
| 4 | 6 | r04n40, r04n42, r04n43, r04n45, r04n46, r04n48 | 240 | 768 GiB | 4.5 TiB | | Gen2.1,Gold-5218R,5218R,768GB |
| 4 | 13 | r04n30 - r04n39, r04n44, r04n47, r04n49 | 520 | 1024 GiB | 13 TiB | | Gen2.1,Gold-5218R,5218R,1024GB |
| 4 | 2 | r04s00 - r04s01 | 80 | 384 GiB | 768 GiB | nvme:raid0:1 | Gen2.1,Gold-5218R,5218R,384GB,Swap-32TB |
| Total | 79 | | 3,180 | | 30.4375 TiB | 0 | Generation 2.1 |
| 5 | 36 | r05n15 - r05n20, r05n30 - r05n59 | 1,728 | 192 GiB | 6.75 TiB | | Gen3,Intel,Gold-6240R,6240R,192GB |
| 5 | 22 | r05n01, r05n03 - r05n14, r05n21 - r05n29 | 1,056 | 384 GiB | 8.25 TiB | | Gen3,Intel,Gold-6240R,6240R,384GB |
| 5 | 2 | r05n00, r05n02 | 96 | 768 GiB | 1.5 TiB | | Gen3,Intel,Gold-6240R,6240R,768GB |
| Total | 60 | | 2,880 | | 16.5 TiB | | Generation 3 |
| 6 | 20 | r06n00 - r06n19 | 960 | 1024 GiB | 20 TiB | | Gen3,Intel,Gold-6240R,6240R,1024GB |
| 6 | 8 | r06n20 - r06n27 | 384 | 768 GiB | 5.25 TiB | | Gen3,Intel,Gold-6240R,6240R,768GB |
| 6 | 1 | r06g04 | 64 | 2048 GiB | 2 TiB | a40:4 | Gen3,AMD,EPYC-7502,7502,2048GB |
| 6 | 1 | r06g06 | 48 | 256 GiB | 256 GiB | a100:2 | Gen3,AMD,EPYC-7352,7352,256GB |
| 6 | 1 | r06g05 | 48 | 512 GiB | 512 GiB | a100:2 | Gen3,AMD,EPYC-7352,7352,512GB |
| 6 | 2 | r06g02 - r06g03 | 96 | 1024 GiB | 2 TiB | a100:4 | Gen3,AMD,EPYC-7352,7352,1024GB |
| 6 | 2 | r06g00 - r06g01 | 96 | 2048 GiB | 4 TiB | a100:8 | Gen3,AMD,EPYC-7352,7352,2048GB |
| Total | 35 | | 1,648 | | 32 TiB | 20 | Generation 3 |
| Grand Total | 367 | | 15,104 | | 121 TiB | 49 | Generation 1, 2, 2.1, 3 |
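
The Grand Total row can be checked against the per-rack Total rows with a few lines of arithmetic. The sketch below copies the per-rack totals from the table above and sums each column; the names `RACK_TOTALS` and `grand_totals` are illustrative only, and the blank GPU cell for rack 5 is assumed to mean zero.

```python
# Per-rack "Total" rows copied from the table above:
# rack number -> (nodes, cores, memory in TiB, GPUs)
RACK_TOTALS = {
    0: (62, 2340, 12.5, 8),
    1: (62, 2304, 12.25, 10),
    2: (2, 72, 0.5, 0),
    3: (67, 2680, 17.0625, 11),
    4: (79, 3180, 30.4375, 0),
    5: (60, 2880, 16.5, 0),  # GPU cell is blank in the table; counted as 0 (assumption)
    6: (35, 1648, 32.0, 20),
}


def grand_totals(rack_totals):
    """Sum each column (nodes, cores, memory, GPUs) across all racks."""
    columns = zip(*rack_totals.values())
    return tuple(sum(column) for column in columns)


if __name__ == "__main__":
    nodes, cores, memory_tib, gpus = grand_totals(RACK_TOTALS)
    print(f"{nodes} nodes, {cores:,} cores, {memory_tib} TiB RAM, {gpus} GPUs")
```

With these figures the sums come to 367 nodes, 15,104 cores and 49 GPUs, matching the Grand Total row. Memory sums to 121.25 TiB, which the table reports as 121 TiB.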

The standard Linux on the compute nodes is configured to support just the running of your jobs, particularly parallel jobs. For example, there are no man pages on the compute nodes. Large components of the OS, such as development tools, are only added to that environment when needed.

All the multi-core CPUs and GPUs share the same memory in what may be a complicated manner. To add more processing capability while keeping hardware expense and power requirements down, most architectures use Non-Uniform Memory Access (NUMA). The processors may also share hardware, such as floating-point units (FPUs).

Commercial applications, and normally your programs, will use a layer of abstraction called a programming model. Consult the cluster specific documentation for advanced techniques to take advantage of the low level architecture.

Storage

Permanent filesystems

At UD, permanent cluster filesystems are those that are backed up or replicated at an off-site disaster recovery facility. This always includes the home filesystem, which contains each user's home directory and has a modest per-user quota. A cluster may also have a larger permanent filesystem used for research group projects. The system is designed to let you recover older versions of files through a self-service process.

High-performance filesystems

One important component of HPC designs is to provide fast access to large files and to many small files. These days, high-performance filesystems have capacities ranging from hundreds of terabytes to petabytes. They are designed to use parallel I/O techniques to reduce file-access time. The Lustre filesystems in use at UD are composed of many physical disks using RAID technologies to give resilience, data integrity, and parallelism at multiple levels. They use high-bandwidth interconnects such as InfiniBand and 10-Gigabit Ethernet.

Large-capacity high-performance filesystems are typically designed as volatile scratch storage systems. The amount of data present makes backing up the filesystem practically and financially infeasible. However, the underlying design increases user confidence by providing a high level of built-in redundancy against hardware failure.

Local filesystems

Each node has an internal, locally connected disk. Its capacity is measured in terabytes. Part of the local disk is used for system tasks such as memory management, which might include cache memory and virtual memory. The remainder of the disk is ideal for applications that need a moderate amount of scratch storage for the duration of a job's run. That portion is referred to as the node scratch filesystem.

Each node scratch filesystem disk is only accessible by the node in which it is physically installed. The job scheduling system creates a temporary directory associated with each running job on this filesystem. When your job terminates, the job scheduler automatically erases that directory and its contents.
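
A job can use this per-job directory for intermediate files. The sketch below assumes the scheduler exposes the directory through the `TMPDIR` environment variable, which this page does not state; check the Storage documentation for the actual mechanism. The function names `scratch_dir` and `write_scratch_file` are hypothetical.

```python
import os
import tempfile


def scratch_dir():
    """Return the directory to use for job-local scratch files.

    Assumption: the scheduler points TMPDIR at the per-job directory.
    Falls back to Python's default temporary directory otherwise.
    """
    return os.environ.get("TMPDIR") or tempfile.gettempdir()


def write_scratch_file(data: bytes) -> str:
    """Write data to a uniquely named file in the scratch directory; return its path."""
    fd, path = tempfile.mkstemp(prefix="job-", suffix=".tmp", dir=scratch_dir())
    with os.fdopen(fd, "wb") as handle:
        handle.write(data)
    return path


if __name__ == "__main__":
    path = write_scratch_file(b"intermediate results\n")
    print(f"wrote {os.path.getsize(path)} bytes to {path}")
    os.remove(path)  # clean up explicitly rather than relying on job teardown
```

Because the directory is erased when the job ends, any results worth keeping should be copied to a permanent or high-performance filesystem before the job finishes.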

More information about Caviness storage and quotas can be found on the sidebar under Storage.

Software

A list of installed software that IT builds and maintains for Caviness users can be found by logging into Caviness and using the VALET command vpkg_list.
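
If you want to capture that list from a script, one option is to call the command through Python's `subprocess` module. This is only a sketch: it assumes `vpkg_list` is an executable on your `PATH` (rather than a shell function) and that it writes its listing to standard output, neither of which is stated here. The function name `list_valet_packages` is made up for illustration.

```python
import shutil
import subprocess


def list_valet_packages(command="vpkg_list"):
    """Run the VALET listing command and return its output as a list of lines.

    Returns None when the command is not found on PATH, for example when
    the script is run somewhere other than a Caviness login session.
    """
    if shutil.which(command) is None:
        return None
    result = subprocess.run([command], capture_output=True, text=True, check=True)
    return result.stdout.splitlines()


if __name__ == "__main__":
    lines = list_valet_packages()
    if lines is None:
        print("vpkg_list not found; run this on a Caviness login node")
    else:
        print(f"vpkg_list printed {len(lines)} lines")
```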

Documentation for all software is organized in alphabetical order on the sidebar under Software.

Review nVidia's GPU-Accelerated Applications list for applications optimized to work with GPUs. These applications can take advantage of nodes equipped with nVidia P100 "Pascal" GPU coprocessors.

Help

System or account problems, or can't find an answer on this wiki

If you are experiencing a system-related problem, first check Caviness cluster monitoring (off-campus access requires UD VPN). To report a new problem, or if you can't find the help you need on this wiki, submit a Research Computing High Performance Computing (HPC) Clusters Help Request. Complete the form, including Caviness in the short description, High Performance Computing as the problem type, and your problem details in the description.

Ask or tell the HPC community

hpc-ask is a Google group established to stimulate interactions within UD’s broader HPC community and is based on members helping members. This is a great venue to post a question about HPC, start a discussion, or share an upcoming event with the community. Anyone may request membership. Messages are sent as a daily summary to all group members. This list is archived, public, and searchable by anyone.

Publication and Grant Writing Resources

For general acknowledgment of UD IT-RCI staff and HPC resources, please use the following text: "This research was supported in part through the use of Information Technologies (IT) resources at the University of Delaware, specifically the high-performance computing resources."

HPC templates are available to use for a proposal or publication to acknowledge use of or describe UD’s Information Technologies HPC resources.