Grid Engine cgroup Integration

Several variants of Grid Engine claim to support integration with Linux cgroups to control resource allocations. One such variant was chosen for the University's Farber cluster partly for the sake of this feature. We were incredibly disappointed to eventually figure out for ourselves that the marketing hype was true in a very rudimentary sense (almost as though this advertised feature was really only at beta quality, at best). Some of the issues we elucidated were:

- The slot count multiplies many resource requests, like m_mem_free. As implemented, the multiplication is applied twice and results in incorrect resource limits. E.g. requesting m_mem_free=2G for a 20-core threaded job, the memory.limit_in_bytes applied by sge_execd to the job's shepherd was 80 GB.
- For multi-node parallel jobs, remote shepherd processes created upon qrsh -inherit to a node never have cgroup limits applied to them.
  - Even if we created the appropriate cgroups on the remote nodes prior to qrsh -inherit (e.g. in a prolog script), the sge_execd never adds the shepherd or its child processes to that cgroup.
- Grid Engine core binding assumes that all core usage is controlled by the qmaster; the sge_execd native sensors do not provide feedback w.r.t. what cores are available/unavailable.
  - If a compute node were configured with some cores reserved for non-GE workload, the qmaster would still attempt to use them.
- Allowing Grid Engine to use the cpuset cgroup to perform core-binding works on the master node for the job, but once again slave nodes have no core-binding applied to them even though the qmaster selected cores for them.
- Using cgroup freezer to clean up after jobs always results in the shepherd's being killed before its child processes, which signals to sge_execd that the job ended in error.
- A bug exists by which a requested m_mem_free which is larger than the requested h_vmem value for the job is ignored and the h_vmem limit gets used for both. This is contrary to documentation.

The magnitude of the flaws and inconsistencies – and the fact that on an HPC cluster multi-node jobs form a significant percentage of the workload – meant that we could not make use of the native cgroup integration in this Grid Engine product, even though it was a primary reason for choosing the product. A worthy system administrator cannot simply wait for the software vendor to make the product work properly. When backed into a corner like this one, the worthy system administrator finds a way to implement the desired operation himself.

Process Quarantine

Disabling the innate cgroup integration features means that processes are no longer created within the appropriate cgroup containers on the master node. This never happened on slave nodes anyway, so a mechanism to quarantine sge_shepherd processes is actually necessary no matter what.

The cgred daemon has the job of doing this on Linux. But cgred has a relatively static rule grammar that is inadequate (on top of that, we found when we built Farber that the native version of cgroup tools in CentOS 6.5 has significant bugs that prevented processes from being quarantined at all despite a correct rule set).

The solution to this problem came when we realized that process fork/exec can be observed on Linux via kernel notifications. A userland program can connect to the kernel via netlink and consume process fork/exec events. In particular we were interested in exec events. For each such event we examine the process's command (/proc/<pid>/comm) and react accordingly:

| command | response |
|---|---|
| sge_shepherd | Create and configure /cgroup/<subsystem>/GE/<jobid>.<taskid> containers |
| sge_execd | Create and configure /cgroup/<subsystem>/GE containers |
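The dispatch on the command name can be sketched as follows. This is a minimal Python illustration, not the actual implementation; the function name `react_to_exec`, the response labels, and the `proc_root` parameter are hypothetical and exist only for this example.

```python
from pathlib import Path

# Commands of interest, mapped to a label for the response described above.
# The labels are hypothetical; only the command names come from the text.
RESPONSES = {
    "sge_shepherd": "configure-job-containers",  # /cgroup/<subsystem>/GE/<jobid>.<taskid>
    "sge_execd": "configure-GE-containers",      # /cgroup/<subsystem>/GE
}


def react_to_exec(pid, proc_root="/proc"):
    """Return the response label for a freshly exec'ed process, or None."""
    try:
        comm = (Path(proc_root) / str(pid) / "comm").read_text().strip()
    except OSError:
        # The process may already have exited by the time the event is handled.
        return None
    return RESPONSES.get(comm)
```

Any command other than the two listed yields `None`, so the vast majority of exec events are ignored cheaply.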

A detailed description of the responses follows.

sge_execd

When sge_execd starts, its cpuset cgroup is configured to:

- set /cgroup/cpuset/GE/cpuset.cpus to the same value as /cgroup/cpuset/cpuset.cpus
- set /cgroup/cpuset/GE/cpuset.cpu_exclusive to 1
- set /cgroup/cpuset/GE/cpuset.mems to the same value as /cgroup/cpuset/cpuset.mems

The last item is of critical importance for both sge_execd and each sge_shepherd – a cpuset with no explicit setting for cpuset.mems cannot have any tasks assigned to it, period!
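The three settings can be sketched in Python as below. This is an illustration under assumptions: the function name `configure_ge_cpuset` and the `cpuset_root` parameter are hypothetical, and the parameter is there mainly so the logic can be exercised against a scratch directory instead of a live cgroup mount.

```python
from pathlib import Path


def configure_ge_cpuset(cpuset_root="/cgroup/cpuset"):
    """Create the GE cpuset and seed it from the root cpuset."""
    root = Path(cpuset_root)
    ge = root / "GE"
    ge.mkdir(exist_ok=True)
    # Mirror the root cpuset's cores into GE and mark them exclusive.
    (ge / "cpuset.cpus").write_text((root / "cpuset.cpus").read_text())
    (ge / "cpuset.cpu_exclusive").write_text("1")
    # Without an explicit cpuset.mems the cpuset cannot accept any tasks.
    (ge / "cpuset.mems").write_text((root / "cpuset.mems").read_text())
    return ge
```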

sge_shepherd

When an sge_shepherd starts, the following actions are taken:

- Create the per-job cgroup in the chosen subsystems, /cgroup/<subsystem>/GE/<jobid>.<taskid>
  - Perform any special initialization actions based on the subsystem in question
- Add the sge_shepherd PID to /cgroup/<subsystem>/GE/<jobid>.<taskid>/tasks
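A minimal Python sketch of these steps follows. The function name `quarantine_shepherd`, the `init_hooks` mapping, and the `cgroup_root` parameter are hypothetical; how the job and task ids are discovered for a given shepherd is not covered here, so they are simply passed in. The default subsystem list matches the defaults the daemon's usage text reports.

```python
from pathlib import Path

DEFAULT_SUBSYSTEMS = ("cpuset", "freezer", "memory")


def quarantine_shepherd(pid, job_id, task_id, subsystems=DEFAULT_SUBSYSTEMS,
                        cgroup_root="/cgroup", init_hooks=None):
    """Create the per-job cgroups and move the shepherd PID into each one."""
    init_hooks = init_hooks or {}
    for subsystem in subsystems:
        job_dir = Path(cgroup_root) / subsystem / "GE" / f"{job_id}.{task_id}"
        job_dir.mkdir(parents=True, exist_ok=True)
        # Subsystem-specific setup must happen before the PID is added.
        hook = init_hooks.get(subsystem)
        if hook is not None:
            hook(job_dir)
        (job_dir / "tasks").write_text(str(pid))
```

Running the hook before the write to `tasks` matters for the cpuset subsystem, which refuses tasks until its cores and memory nodes are set.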

The only subsystem with special initialization is the cpuset:

- Set /cgroup/cpuset/GE/<jobid>.<taskid>/cpuset.mems to the same value as /cgroup/cpuset/GE/cpuset.mems
- Choose a single core for /cgroup/cpuset/GE/<jobid>.<taskid>/cpuset.cpus

Core selection is done by setting /cgroup/cpuset/GE/<jobid>.<taskid>/cpuset.cpu_exclusive and iterating over the core id range dictated by /cgroup/cpuset/GE/cpuset.cpus. If a core cannot be used, writing its id to /cgroup/cpuset/GE/<jobid>.<taskid>/cpuset.cpus will fail. The first write to succeed is the core selected initially for the job.
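The first-successful-write strategy can be sketched as follows. This is an illustrative Python version, not the daemon's code; `parse_cpu_list`, `select_first_free_core`, and `claim_in_cgroup` are hypothetical names. The claim step is passed in as a callable so the selection loop can be tried without a real cpuset hierarchy.

```python
import os


def parse_cpu_list(text):
    """Expand a cpuset list such as "0-3,8,10-11" into individual core ids."""
    cores = []
    for part in text.strip().split(","):
        if not part:
            continue
        first, _, last = part.partition("-")
        cores.extend(range(int(first), int(last or first) + 1))
    return cores


def select_first_free_core(ge_cpus_text, try_claim):
    """Return the first core id that try_claim accepts, or None if none do."""
    for core in parse_cpu_list(ge_cpus_text):
        try:
            try_claim(core)
        except OSError:
            continue  # core is held exclusively by another job's cpuset
        return core
    return None


def claim_in_cgroup(job_dir):
    """Build a try_claim callable that writes a core id to cpuset.cpus."""
    def try_claim(core):
        fd = os.open(os.path.join(job_dir, "cpuset.cpus"), os.O_WRONLY)
        try:
            os.write(fd, str(core).encode())
        finally:
            os.close(fd)
    return try_claim
```

On a live system the call would look like `select_first_free_core(ge_cpus, claim_in_cgroup(job_dir))`, where `ge_cpus` is the content of /cgroup/cpuset/GE/cpuset.cpus.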

sge-digby

The quarantine behavior described above is implemented in a daemon (written in C) called sge-digby. The daemon registers with the kernel to receive process fork/exec events and enters a runloop waiting on delivery of events. On delivery of exec events the daemon checks /proc/<pid>/comm and reacts to sge_shepherd or sge_execd by performing the actions described above.
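To make the event handling concrete, here is a hedged Python sketch of decoding one message delivered by the kernel's process connector. The byte layout assumed here (a 16-byte netlink header, a 20-byte connector header, then the process event) follows the definitions in linux/netlink.h, linux/connector.h, and linux/cn_proc.h as we understand them and should be checked against the headers on the target kernel. The function name `exec_event_tgid` is hypothetical, and subscribing to the events over a netlink socket is not shown.

```python
import struct

# Assumed layouts; "=" means native byte order with no padding.
NLMSG_HDR = struct.Struct("=IHHII")      # len, type, flags, seq, pid
CN_MSG_HDR = struct.Struct("=IIIIHH")    # id.idx, id.val, seq, ack, len, flags
PROC_EVENT_HDR = struct.Struct("=IIQ")   # what, cpu, timestamp_ns
EXEC_EVENT = struct.Struct("=ii")        # process_pid, process_tgid

CN_IDX_PROC = 0x1
PROC_EVENT_FORK = 0x00000001
PROC_EVENT_EXEC = 0x00000002


def exec_event_tgid(message):
    """Return the process id (tgid) from an exec event, else None."""
    needed = (NLMSG_HDR.size + CN_MSG_HDR.size
              + PROC_EVENT_HDR.size + EXEC_EVENT.size)
    if len(message) < needed:
        return None
    offset = NLMSG_HDR.size
    idx = CN_MSG_HDR.unpack_from(message, offset)[0]
    if idx != CN_IDX_PROC:
        return None  # not a process-connector message
    offset += CN_MSG_HDR.size
    what = PROC_EVENT_HDR.unpack_from(message, offset)[0]
    if what != PROC_EVENT_EXEC:
        return None  # fork, exit, and other events are ignored
    offset += PROC_EVENT_HDR.size
    _pid, tgid = EXEC_EVENT.unpack_from(message, offset)
    return tgid
```

The returned id is what would then be used to read /proc/<pid>/comm.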

Runtime options are as follows:

    usage:

      sge-digby {options}

     options:

      --help/-h                    show this information
      --tracefile/-t <path>        actions performed will be logged to
                                   the given <path>. If <path> is omitted
                                   the trace is logged to stderr
      --enable/-e <subsystem>      enable checks against the given cgroup
                                   subsystem
      --disable/-d <subsystem>     disable checks against the given cgroup
                                   subsystem
      --daemon/-D                  run as a daemon
      --pidfile/-p <path>          file in which our pid should be written
      --sentinel-dir/-s <path>     directory in which to stash sentinel files
                                   (default: /dev/shm/digby)

      <subsystem> should be one of:

        blkio, cpu, cpuacct, cpuset, devices, freezer, memory, net_cls

      Subsystems enabled by default are:

        cpuset, freezer, memory

Job Resource Information

Grid Engine makes some job resource details available in the job's environment: total slot count and total host count. But other items of interest remain unexposed by default: memory limits, coprocessors allocated. For a job that will run using one nVidia GPU on a node containing more than one GPU, how does the job determine which GPU it's been granted? How does a job whose executable wants to know a maximum allocatable memory limit find that information?

By making each compute node in the cluster an execution as well as a submission host, the qstat command becomes available. The -xml -j <job-id> flags to qstat produce a more complete description of a job; in particular, granted resource requests are present for jobs that are running. Extracting that information from the large XML document generated can be difficult in BASH, though. Our solution was to write a helper program that uses the libxml2 library to parse the qstat -xml -j <job-id> output and then walks resource XML nodes by means of XPath expressions.
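The XPath-driven approach can be illustrated with a small Python sketch. The XML fragment below is invented for the example and is not the schema that qstat -xml -j emits; the element and attribute names (`job`, `granted`, `host`, `name`, `value`) and the function name `granted_by_host` are all hypothetical. The sketch also uses Python's standard ElementTree, which supports only a subset of XPath, rather than libxml2.

```python
import xml.etree.ElementTree as ET

# Invented stand-in for qstat output; the real document is far larger
# and uses different element names.
SAMPLE = """<job id="23">
  <granted host="n001" name="m_mem_free" value="2G"/>
  <granted host="n001" name="nvidia_gpu" value="1"/>
  <granted host="n002" name="m_mem_free" value="2G"/>
</job>"""


def granted_by_host(xml_text, resource):
    """Map each host to its granted value for one resource complex."""
    root = ET.fromstring(xml_text)
    nodes = root.findall(f".//granted[@name='{resource}']")
    return {node.get("host"): node.get("value") for node in nodes}
```

A single expression selects every matching node regardless of depth, which is what makes XPath more convenient than text processing in BASH.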

sge-rsrc

The sge-rsrc program streamlines the extraction of resource information for a job. It produces (on stdout) a sequence of BASH statements that can be evaluated to set up variables containing the resource information on a per-host basis. It was primarily written for the sake of the prolog and epilog scripts, but is also useful in Phi/GPU job scripts in an environment where nodes have multiple coprocessors (and the job needs to know which one(s) it's been given). Typically, the BASH commands create the following array variables upon evaluation:

| variable | value description |
|---|---|
| SGE_RESOURCE_HOSTS | array of participating host names |
| SGE_RESOURCE_NSLOTS | array of core counts on each participating host |
| SGE_RESOURCE_MEM | array of cgroup memory limit for each participating host (from the m_mem_free complex) |
| SGE_RESOURCE_VMEM | array of cgroup virtual memory limit for each participating host (from the h_vmem complex) |
| SGE_RESOURCE_GPU | array of GPU assignments for each participating host (space delimited, from the nvidia_gpu complex) |
| SGE_RESOURCE_PHI | array of Intel Phi assignments for each participating host (space delimited, from the intel_phi complex) |
| SGE_RESOURCE_JOB_MAXRT | hard maximum wallclock (h_rt) for the job (in seconds) |
| SGE_RESOURCE_JOB_IS_STANDBY | if non-zero, job will run on standby queues |
| SGE_RESOURCE_JOB_VMEM | hard maximum vmem (h_vmem) for the job (in bytes, per-slot) |

In prolog mode the variables are named SGE_PROLOG_* and in epilog mode SGE_EPILOG_*. Prolog mode adds echo commands meant to log useful resource information to a job's stdout file.

The ordering of SGE_RESOURCE_HOSTS dictates the order of values in the other arrays (e.g. the slot allotment for the host in ${SGE_RESOURCE_HOSTS[0]} is in ${SGE_RESOURCE_NSLOTS[0]}). For any host lacking an allotment for a particular resource the array in question will contain an empty string.

Adding the --only flag to sge-rsrc limits the variables to be scalar (not arrays) and contain only the resource allotments for the node on which the command was issued. Using the --host flag also produces scalar variables, but for a specific participating host (by name).

The sge-rsrc command has built-in help that is accessed using the --help flag.

    usage:

      sge-rsrc {options} [task-id]

     options:

      -h/--help                    show this information
      -m/--mode=[mode]             operate in the given mode:

                                     prolog: SGE prolog script
                                     epilog: SGE epilog script
                                     userenv: user environment

      -p/--prolog                  shorthand for --mode=prolog
      -e/--epilog                  shorthand for --mode=epilog
      -o/--only                    return information for the native
                                   host only, not an array of hosts
      -H/--host=[hostname]         return information for the specified
                                   host only, not an array of hosts
      -j/--jobid=[job_id]          request info for a specific job id
                                   (without this option, qstat output
                                   is expected on stdin)

Cgroup Refinement

Earlier in this document we saw that the sge-digby daemon catches new sge_shepherd processes and quarantines them in job-specific cgroup containers. The daemon assigns the shepherd (and its child processes) exclusive use of a single processor core. For parallel jobs or jobs that have memory limits placed on them, further modification of the memory and cpuset job-specific cgroup containers is required to meet those resource limits.

cgroup_bind.sh

The cgroup_bind.sh script takes four arguments – job-specific cgroup entity (e.g. <job-id>.<task-id>), core count, memory limit, virtual memory limit – and writes the appropriate cgroup configuration files for the job to enact those limits. Either memory limit can be 0 to indicate no limit. For example, to impose a 1 GB limit, unlimited virtual memory, and 4 cores on job 23.4:

$ cgroup_bind.sh 23.4 4 1073741824 0

If the first argument is the flag --unlimit then the rest of the resource arguments are ignored and the cgroup configuration files are modified to use the limits imposed on sge_execd itself.

$ cgroup_bind.sh --unlimit 23.4 0 0 0

The script is called from the Grid Engine job prolog script. For slave nodes the sge_shepherd for the job will not yet have been created, so the cgroup_bind.sh script by necessity creates the applicable job-specific cgroup entities (e.g. /cgroup/memory/GE/<job-id>.<task-id> and /cgroup/cpuset/GE/<job-id>.<task-id>).
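The memory half of what the script does might look like the Python sketch below. Several points are assumptions rather than facts about cgroup_bind.sh: that the virtual memory limit maps to memory.memsw.limit_in_bytes (which requires swap accounting to be enabled), that a limit of 0 is expressed by writing -1 (the cgroup v1 way of removing a limit), and the names `memory_settings` and `apply_memory_limits`. Core assignment is omitted.

```python
from pathlib import Path


def memory_settings(mem_limit, vmem_limit):
    """Translate byte limits (0 = no limit) into cgroup v1 file values."""
    def value(limit):
        return "-1" if limit == 0 else str(limit)
    return [
        ("memory.limit_in_bytes", value(mem_limit)),
        # Assumption: the virtual memory limit is enforced as memory+swap.
        ("memory.memsw.limit_in_bytes", value(vmem_limit)),
    ]


def apply_memory_limits(job, mem_limit, vmem_limit, cgroup_root="/cgroup"):
    """Create /cgroup/memory/GE/<job> if needed and write its limits."""
    job_dir = Path(cgroup_root) / "memory" / "GE" / job
    # On slave nodes the shepherd has not run yet, so the directory
    # may not exist.
    job_dir.mkdir(parents=True, exist_ok=True)
    for name, value in memory_settings(mem_limit, vmem_limit):
        (job_dir / name).write_text(value)
    return job_dir
```

With the arguments from the first example above, `apply_memory_limits("23.4", 1073741824, 0)` would write a 1 GB memory limit and leave memory+swap unlimited.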

Intel Phi Integration

Unlike an nVidia GPU, the Intel Phi is essentially a computer running Linux. As such, it has its own passwd and group files and an in-memory filesystem. Rather than propagating all user accounts to the Phi and keeping it booted constantly, we prefer to reset and boot the Phi on demand as part of the startup of a job that has requested Phi resources. Likewise, when the job completes the Phi is reset to the "ready" state to await its next job.

boot_mic.sh

This script requires as arguments the job owner's uid and a list of the mic# coprocessors which should be booted on the host:

$ boot_mic.sh traine mic0 mic1

The script loops across each mic# and uses the micctrl command to coax the device into a booted state. Once booted:

- the group and passwd records for the job owner are added to the Phi
- the NFS software directory is mounted on the Phi (/opt/shared)
- the owner's NFS home directory is mounted on the Phi (/home/<uidnumber>)
- the NFS workgroup directories to which the owner has access are mounted on the Phi (/home/work/<workgroup>)

If successful across all nodes, the exit value is zero. The Phi is visible on the cluster's Ethernet network and the user's cluster-wide home directory is mounted, so passwordless ssh access is possible directly to the Phi coprocessor.

p132-mic0 is the first MIC on the compute node named p132.

reset_mic.sh

This script requires as arguments a list of the mic# coprocessors which should be reset on the host:

$ reset_mic.sh mic0 mic1

The script loops across each mic# and uses the micctrl command to coax the device into a ready state.

Grid Engine Prolog

The prolog script calls sge-rsrc (in prolog mode) and uses the array(s) produced to complete the setup of the job's cgroup subsystems.

A race condition exists between the prolog script and sge-digby which has the latter overwriting the configuration done by the former. In practice, this happened with qlogin jobs and had sge-digby resetting the cpuset.cpus to a single core when a threaded, multi-core configuration was desired. As a fix, the prolog script waits on a sentinel file that sge-digby writes when it has finished its configuration work.
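The wait on the sentinel file amounts to a bounded polling loop, sketched here in Python. The function name `wait_for_sentinel`, the timeout, and the polling interval are hypothetical choices for illustration; the name of the sentinel file within the sentinel directory is not specified here, so the caller supplies the full path.

```python
import os
import time


def wait_for_sentinel(path, timeout=30.0, interval=0.1):
    """Poll until the sentinel file exists; return False on timeout."""
    deadline = time.monotonic() + timeout
    while True:
        if os.path.exists(path):
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

Bounding the wait keeps a job from hanging indefinitely if the daemon is not running; what to do on timeout is a policy decision left to the caller.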

The prolog script runs only on the master node for a job. For each host involved in the job, the cgroup_bind.sh script is called to make the desired cgroup configuration changes on that host (invoked directly on the master and via ssh to any slave hosts).
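The per-host fan-out can be sketched as a function that builds one command line per participating host. This Python version is illustrative only: `bind_commands` and its parameters are hypothetical names, the lists stand in for the parallel arrays produced by sge-rsrc, and treating an empty allotment as 0 (no limit) is an assumption.

```python
def bind_commands(job, hosts, nslots, mem, vmem, local_host):
    """Build one cgroup_bind.sh command line per participating host."""
    commands = []
    # The lists are parallel: index i in each describes hosts[i].
    for host, slots, mem_limit, vmem_limit in zip(hosts, nslots, mem, vmem):
        argv = ["cgroup_bind.sh", job, str(slots),
                str(mem_limit or 0), str(vmem_limit or 0)]
        if host != local_host:
            # Slave hosts are configured remotely over ssh.
            argv = ["ssh", host] + argv
        commands.append(argv)
    return commands
```

Returning argument lists instead of running them keeps the sketch side-effect free; each list could be handed to `subprocess.run` as is.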

On hosts with an Intel Phi, the prolog script is also responsible for provisioning any mic# devices granted to the job. For each Phi across all hosts participating in the job, the boot_mic.sh script is run to boot the Phi and configure it for the job's user.

The prolog script logs its progress to the job's stdout file.

Grid Engine Epilog

The epilog script calls sge-rsrc (in epilog mode) and for any host with Intel Phi coprocessors associated with the job, it calls the reset_mic.sh script to reboot the device and get it back to a "ready" state.

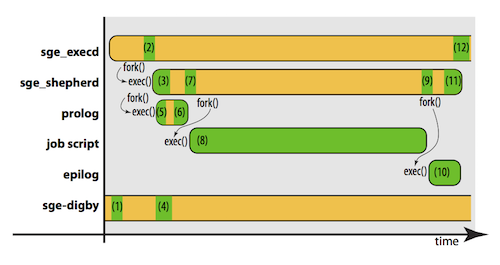

Process Overview

The following figure provides an overview of how all of the components described above fit together:

| Stage # | Discussion |
|---|---|
| 1 | The sge-digby daemon is notified of the exec() of sge_execd; configures /cgroup/<subsystem>/GE containers |
| 2 | The sge_execd is asked to execute a job; it forks and executes a shepherd for the job |
| 3 | The sge_shepherd for the job begins executing; the shepherd forks and executes the Grid Engine prolog script |
| 4 | At the same time as 3, sge-digby is notified of the exec() of sge_shepherd; configures /cgroup/<subsystem>/GE/<job-id>.<task-id> containers |
| 5 | The Grid Engine prolog script executes; the script sleeps waiting on the sge-digby sentinel file to appear |
| 6 | The sge-digby sentinel file appears, the Grid Engine prolog continues execution: cgroup_bind.sh and boot_mic.sh are executed, etc. |
| 7 | The Grid Engine prolog completes execution; the shepherd forks and executes the job script |
| 8 | The job script begins execution |
| 9 | The job script completes execution; the shepherd forks and executes the Grid Engine epilog script |
| 10 | The Grid Engine epilog script executes |
| 11 | The Grid Engine epilog completes execution; the shepherd returns accounting information to qmaster and completes execution |
| 12 | The sge_shepherd for the job completes execution |

For multi-node jobs the process differs only slightly. The cgroup configuration made on slave nodes by the Grid Engine prolog script (stage 6) is present but unused until a qrsh -inherit is used to start up remote processes on a slave node. When this occurs, sge_execd on the slave node forks and executes a new sge_shepherd, at which time sge-digby is notified of its execution and quarantines the process into the cgroup containers created by the Grid Engine prolog.