All About Lustre

The filesystems with which most users are familiar store data in discrete chunks called blocks on a single hard disk. Blocks have a fixed size (for example, 4 KiB), so a 1 MiB file will be split into 256 blocks (256 blocks x 4 KiB/block = 1024 KiB). Performance of this kind of filesystem depends on the speed at which blocks can be read from and written to the hard disk (input/output, or i/o). Different kinds of disk yield differing i/o performance: a solid-state disk (SSD) will move blocks back and forth faster than a basic SATA hard disk used in a desktop computer.

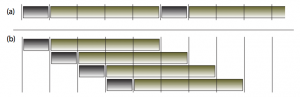

Buying a better (and more expensive) disk is one way to improve i/o performance, but once the fastest, most expensive disk has been purchased this path leaves no room for further improvement. The demands of an HPC cluster with several hundred (maybe even thousands of) compute nodes quickly outpace the speed at which a single disk can shuttle bytes back and forth. Parallelism saves the day: store the filesystem blocks on more than one disk and the i/o performance of each will sum (to a degree). For example, consider a computer that needs 1 cycle to move a block to a hard disk, and a hard disk that needs 4 cycles to write that block. Storing four blocks to just one hard disk would require 20 cycles: 1 cycle to move each block to the disk and 4 cycles to write it, with each block waiting on the completion of the previous one (example (a) above).

With four disks being used in parallel (example (b) above), the block writing overlaps and takes just 8 cycles to complete.
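The cycle counts in this example can be checked with a short model. The sketch below uses Python purely for illustration. The function name and the scheduling rule (the computer sends one block at a time, and a disk is busy from the start of a transfer until its write completes) are assumptions that simplify the example, not a description of real hardware.

```python
def cycles_to_store(n_blocks, n_disks, move_cycles=1, write_cycles=4):
    """Cycles needed to store n_blocks, assigning blocks round-robin to disks.

    Assumed model: the computer transfers one block at a time and waits for
    the target disk to be idle before it starts the transfer.
    """
    disk_free_at = [0] * n_disks   # cycle at which each disk becomes idle
    bus_free_at = 0                # cycle at which the computer can send again
    for block in range(n_blocks):
        disk = block % n_disks
        start = max(bus_free_at, disk_free_at[disk])
        bus_free_at = start + move_cycles
        disk_free_at[disk] = bus_free_at + write_cycles
    return max(disk_free_at)


print(cycles_to_store(4, 1))  # one disk: 20 cycles
print(cycles_to_store(4, 4))  # four disks: 8 cycles
```

In this model the speed-up from four disks is 2.5x rather than 4x, because the blocks still leave the computer one at a time. That is the sense in which the performance of the disks sums only "to a degree".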

Parallel use of multiple disks is the key behind many higher-performance disk technologies. RAID (Redundant Array of Independent Disks) level 6 uses four or more disks to improve i/o performance while also storing parity information for the data. Should one or two of the constituent disks fail, the missing data can be reconstructed from the parity information. It is RAID-6 that forms the basic building block of the Lustre filesystem on the Mills cluster.

A Storage Node

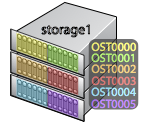

The Mills cluster contains five storage appliances that each contain many hard disks. For example, storage1 contains 36 SATA hard disks (2 TB each) arranged as six 8 TB RAID-6 units:

Each of the six OST (Object Storage Target) units can survive the concurrent failure of one or two hard disks at the expense of storage space: the raw capacity of storage1 is 72 TB, but the data resilience afforded by RAID-6 costs a full third of that capacity (leaving 48 TB).
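The capacity figures for storage1 follow from simple arithmetic, sketched below in Python. The sketch assumes that each RAID-6 unit gives up the equivalent of two disks to parity, which is consistent with six 2 TB disks yielding an 8 TB unit. The function and variable names are illustrative only.

```python
def raid6_usable_tb(disks_per_unit, disk_tb, parity_disks=2):
    """Usable capacity of one RAID-6 unit (two disks' worth of parity assumed)."""
    return (disks_per_unit - parity_disks) * disk_tb


total_disks = 36
disk_tb = 2
units = 6

disks_per_unit = total_disks // units                 # 6 disks per OST
raw_tb = total_disks * disk_tb                        # 72 TB raw
unit_tb = raid6_usable_tb(disks_per_unit, disk_tb)    # 8 TB per OST
usable_tb = units * unit_tb                           # 48 TB usable

print(f"raw {raw_tb} TB, usable {usable_tb} TB, "
      f"parity overhead {(raw_tb - usable_tb) / raw_tb:.0%}")
```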

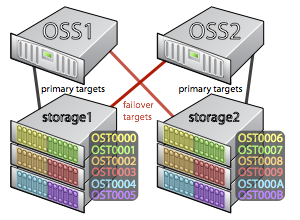

The storage appliances are limited in their capabilities: they only function to move blocks of data to and from the disks they contain. In an HPC cluster the storage is shared between many compute nodes. Nodes funnel their i/o requests to the shared storage system by way of the cluster's private network. A dedicated machine called an OSS (Object Storage Server) acts as the middleman between the cluster nodes and the OSTs:

In Mills, each OSS is primarily responsible for one storage appliance's OSTs. As illustrated above, OST0000 through OST0005 are serviced primarily by OSS1. If OSS1 were to fail, compute nodes would no longer be able to interact with those OSTs. This situation is tempered by having each OSS act as a failover OSS for a secondary set of OSTs. If OSS1 fails, then OSS2 will take control of OST0000 through OST0005 in addition to its own OST0006 through OST000B. When OSS1 is repaired, it can retake control of its OSTs from its partner.
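The failover arrangement can be pictured as a small lookup table. The Python sketch below is only a model of the pairing described here: the dictionaries and function names are hypothetical and do not correspond to any Lustre configuration format. It also shows that the OST names are numbered in hexadecimal, which is why OST0009 is followed by OST000A.

```python
def ost_names(first, count):
    """Names such as OST0000; the index is printed as four hex digits."""
    return [f"OST{index:04X}" for index in range(first, first + count)]


# Primary assignments and failover partners for one OSS pair (illustrative).
PRIMARY = {"OSS1": ost_names(0, 6), "OSS2": ost_names(6, 6)}
PARTNER = {"OSS1": "OSS2", "OSS2": "OSS1"}


def osts_served(failed=None):
    """Return the OSTs each running OSS serves, optionally with one OSS down."""
    serving = {oss: list(osts) for oss, osts in PRIMARY.items()}
    if failed is not None:
        orphaned = serving.pop(failed)
        serving[PARTNER[failed]].extend(orphaned)
    return serving


print(osts_served())             # normal operation
print(osts_served(failed="OSS1"))  # OSS2 serves all twelve OSTs
```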

The status of the Lustre servers can be checked with the lfs check servers command.

The Lustre Filesystem

As illustrated thus far, each OST increases i/o performance by simultaneously moving data blocks to the six hard disks of a RAID-6 unit. Each OSS services six OSTs, accepting and interleaving six unique i/o workloads to further increase the speed with which data moves to and from the OSTs. Having multiple OSSs (and thus additional OSTs) adds yet another level of parallelism to the scheme, since every additional OSS brings six more i/o workloads that are processed concurrently. The agglomeration of multiple OSS nodes, each servicing one or more OSTs, is the basis of a Lustre filesystem.

The benefits of a Lustre filesystem should be readily apparent from the discussion above:

- Parallelism is leveraged at multiple levels to increase i/o performance

- RAID-6 storage at the base level provides resilience

- Filesystem capacity is not limited by hard disk size

The capacity of a Lustre filesystem is the sum of its constituent OSTs, so a Lustre filesystem's capacity can be grown by the addition of OSTs (and possibly OSSs to serve them). For example, should the 172 TB Lustre filesystem on Mills begin to approach its capacity, additional capacity could be added with zero downtime by buying and installing another OSS pair.

Further Performance Boost: Striping

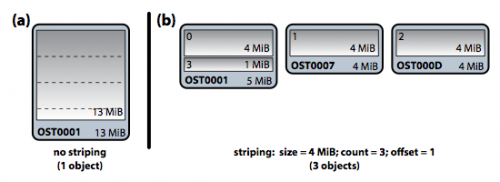

Normally on a Lustre filesystem each file resides in toto on a single OST. In Lustre terminology, a file maps to a single object, and an object is a variable-size chunk of data which resides on an OST. When a program works with a file, it must direct all of its i/o requests to a single OST (and thus a single OSS).

For large files, or files that are internally organized as "records", i/o performance can be further improved by striping the file across multiple OSTs. Striping divides a file into a set of sequential, fixed-size chunks. The stripes are distributed round-robin to N unique Lustre objects, and thus to N unique OSTs. For example, consider a 13 MiB file:

Without striping, all 13 MiB of the file resides in a single object on OST0001 (see (a) above). All i/o with respect to this file is handled by OSS1; appending 5 MiB to the file will grow the object to 18 MiB.

With a stripe count of three and a stripe size of 4 MiB, the Lustre filesystem pre-allocates three objects on unique OSTs on behalf of the file (see (b) above). The file is split into sequential 4 MiB segments (the stripes), and the stripes are written round-robin to the objects allocated to the file. In this case, appending 5 MiB to the file will see stripe 3 extended to a full 4 MiB and a new stripe of 2 MiB added to the object on OST0007. For large files and record-style files, striping introduces another level of parallelism that can dramatically increase the performance of programs that access them.
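The round-robin layout in this example can be reproduced with a few lines of arithmetic. The Python sketch below is a model of the description above, not Lustre code; the function names are illustrative, and objects are identified by their index (0, 1, 2) rather than by OST name.

```python
MIB = 1024 * 1024


def object_sizes(file_size, stripe_count, stripe_size):
    """Bytes held by each of the stripe_count objects for a file of file_size bytes."""
    sizes = [0] * stripe_count
    full_stripes, remainder = divmod(file_size, stripe_size)
    for stripe in range(full_stripes):
        sizes[stripe % stripe_count] += stripe_size
    if remainder:
        # The last, partial stripe goes to the next object in the rotation.
        sizes[full_stripes % stripe_count] += remainder
    return sizes


def locate(offset, stripe_count, stripe_size):
    """Map a byte offset in the file to (object index, offset within that object)."""
    stripe = offset // stripe_size
    obj = stripe % stripe_count
    obj_offset = (stripe // stripe_count) * stripe_size + offset % stripe_size
    return obj, obj_offset


before = object_sizes(13 * MIB, 3, 4 * MIB)
after = object_sizes(18 * MIB, 3, 4 * MIB)
print([size // MIB for size in before])  # [5, 4, 4]
print([size // MIB for size in after])   # [8, 6, 4]
```

The first object grows from 5 MiB to 8 MiB when stripe 3 is filled, and the remaining 2 MiB of the append land on the second object as the new stripe 4.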

Use the lfs setstripe command to pre-allocate the objects for a striped file. For example, lfs setstripe -c 4 -s 8m my_new_file.nc would create the file my_new_file.nc containing zero bytes, with a stripe size (-s) of 8 MiB and striped across four objects (-c).

Creating a new file with lfs setstripe and copying the contents of the old file into it effectively changes the data's striping pattern.
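As a rough illustration of the create-then-copy approach, the Python sketch below builds the lfs setstripe command line and then copies the old file's bytes into the pre-striped file. The -c and -s options are the ones used on this page; other Lustre releases may spell them differently, so check lfs help setstripe before relying on them. The function names and file names are hypothetical.

```python
import shutil
import subprocess


def setstripe_command(path, stripe_count, stripe_size):
    """Argument list for pre-allocating a striped file (flags as used on this page)."""
    return ["lfs", "setstripe", "-c", str(stripe_count), "-s", stripe_size, path]


def restripe_by_copy(old_path, new_path, stripe_count, stripe_size):
    """Create new_path with the requested striping, then copy old_path into it."""
    subprocess.run(setstripe_command(new_path, stripe_count, stripe_size), check=True)
    # Open the pre-allocated file for update so it is reused rather than recreated.
    with open(old_path, "rb") as src, open(new_path, "r+b") as dst:
        shutil.copyfileobj(src, dst)


# Show the command only; running it requires a Lustre client.
print(" ".join(setstripe_command("my_new_file.nc", 4, "8m")))
```

Once the copy has been verified, the old file can be removed and the new file renamed to take its place.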

Lustre utilities

There are two custom utilities, lrm and ldu, available for removing files and checking disk usage on Lustre. They both make use of specially-written code that rate-limits calls to the lstat(), unlink() and rmdir() C functions to minimize the stress on Lustre. Both of these utilities should be used on a compute node only (using qlogin).

Before running lrm or ldu, it's a good idea to first start a screen session and then log in to a compute node using qlogin.

Delete or remove (lrm)

lrm is a custom utility available for removing files on Lustre. It reuses all but the --force flag of the native rm utility and reproduces its runtime behavior as closely as possible. An additional option is present to track the size of all the removed items and report that at the end of the process. lrm should be used on a compute node only.

```
[traine@mills ~]$ lrm
usage:

  lrm {options} <path> {<path> ..}

 options:

  -h/--help                This information
  -V/--version             Version information
  -q/--quiet               Minimal output, please
  -v/--verbose             Increase the level of output to stderr as the program
  --interactive{=WHEN}     Prompt the user for removal of items. Values for WHEN
                           are never, once (-I), or always (-i). If WHEN is not
                           specified, defaults to always
  -i                       Shortcut for --interactive=always
  -I                       Shortcut for --interactive=once; user is prompted one time
                           only if a directory is being removed recursively or if more
                           than three items are being removed
  -r/--recursive           Remove directories and their contents recursively
  -s/--summary             Display a summary of how much space was freed...
    -k/--kilobytes         ...in kilobytes
    -H/--human-readable    ...in a size-appropriate unit
  -S/--stat-limit #.#      Rate limit on calls to stat(); floating-point value in
                           units of calls / second
  -U/--unlink-limit #.#    Rate limit on calls to unlink() and rmdir(); floating-
                           point value in units of calls / second
  -R/--rate-report         Always show a final report of i/o rates

 $Id: lrm.c 470 2013-08-22 17:40:01Z frey $
```

The example below shows user traine in workgroup it_nss on compute node n012 removing the /lustre/work/it_nss/projects/namd directory and all of its files and subdirectories using the --recursive option. The additional option --summary is also used to display how much space was freed, in bytes. Note that traine was already in /lustre/work/it_nss/projects before using qlogin to log in to the compute node n012.

```
[(it_nss:traine)@mills projects]$ qlogin
Your job 369292 ("QLOGIN") has been submitted
waiting for interactive job to be scheduled ...
Your interactive job 369292 has been successfully scheduled.
Establishing /opt/shared/OpenGridScheduler/local/qlogin_ssh session to host n012 ...
Last login: Thu Aug 22 14:32:16 2013 from mills.mills.hpc.udel.edu
[traine@n012 projects]$ pwd
/lustre/work/it_nss/projects
[traine@n012 projects]$ lrm --summary --recursive --stat-limit 100 --unlink-limit 100 ./namd
lrm: removed 354826645 bytes
```

The example sets both rate limits (--stat-limit and --unlink-limit) to 100 in order to remove files at a slow pace on Lustre.

Disk usage (ldu)

ldu is a custom utility available for summarizing disk usage on Lustre. Within the options listed below, it reproduces the runtime behavior of the native du as closely as possible. ldu should be used on a compute node only.

```
[traine@mills ~]$ ldu
usage:

  ldu {options} <path> {<path> ..}

 options:

  -h/--help                This information
  -V/--version             Version information
  -q/--quiet               Minimal output, please
  -v/--verbose             Increase the level of output to stderr as the program
  -k/--kilobytes           Display usage sums in kilobytes
  -H/--human-readable      Display usage sums in a size-appropriate unit
  -S/--stat-limit #.#      Rate limit on calls to stat(); floating-point value in
                           units of calls / second
  -R/--rate-report         Always show a final report of i/o rates

 $Id: ldu.c 470 2013-08-22 17:40:01Z frey $
```

The example below shows user traine in workgroup it_nss on compute node n012 summarizing the disk usage of the /lustre/work/it_nss/projects directory. Note that traine was already in /lustre/work/it_nss/projects before using qlogin to log in to compute node n012.

```
[(it_nss:traine)@mills projects]$ qlogin
Your job 369292 ("QLOGIN") has been submitted
waiting for interactive job to be scheduled ...
Your interactive job 369292 has been successfully scheduled.
Establishing /opt/shared/OpenGridScheduler/local/qlogin_ssh session to host n012 ...
Last login: Thu Aug 22 14:32:16 2013 from mills.mills.hpc.udel.edu
[traine@n012 projects]$ pwd
/lustre/work/it_nss/projects
[traine@n012 projects]$ ldu --human-readable --stat-limit 100 ./
[2013-08-22 13:48:00-0400] leon_stat: 25765 calls over 103.396 seconds (249 calls/sec)
[2013-08-22 13:48:37-0400] leon_stat: 25793 calls over 141.063 seconds (183 calls/sec)
[2013-08-22 13:49:19-0400] leon_stat: 25838 calls over 183.257 seconds (141 calls/sec)
[2013-08-22 13:50:43-0400] leon_stat: 26778 calls over 266.790 seconds (100 calls/sec)
821.07 GiB /lustre/work/it_nss/projects
```

The example sets the rate limit (--stat-limit) to 100 in order to summarize the disk usage on Lustre at a slow pace.

You may have noticed that the first rate shown above is NOT 100 as requested. It takes 1 second for the rate-limiting logic to gather initial lstat(), rmdir() and unlink() profiles. After that, instantaneously meeting the desired rate would have the utility calling lstat() in bursts with long periods of inactivity between those bursts. This is not the desired behavior. Instead, the utility uses much shorter periods of inactivity to eventually meet the requested rate.
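The gradual convergence described here can be imitated with a small simulation. The Python sketch below is not the code used by lrm or ldu; it is one plausible way to get the same effect. It assumes an unthrottled call costs 4 ms, a one-second warm-up without throttling, and a cap of 20 ms on any single pause. All names and numbers are illustrative.

```python
def pause_needed(calls_so_far, elapsed, target_rate, max_pause):
    """Seconds to sleep before the next call.

    ahead_by is how far the caller is ahead of the schedule implied by
    target_rate; the pause is capped so that no single sleep is long.
    """
    ahead_by = calls_so_far / target_rate - elapsed
    return min(max(ahead_by, 0.0), max_pause)


def simulate(n_calls, target_rate=100.0, call_cost=0.004, warmup=1.0,
             max_pause=0.02, report_every=250):
    """Return (calls, elapsed seconds, average calls/sec) checkpoints."""
    elapsed = 0.0
    checkpoints = []
    for calls in range(1, n_calls + 1):
        elapsed += call_cost                   # time spent in the call itself
        if elapsed >= warmup:                  # no throttling during warm-up
            elapsed += pause_needed(calls, elapsed, target_rate, max_pause)
        if calls % report_every == 0:
            checkpoints.append((calls, elapsed, calls / elapsed))
    return checkpoints


for calls, elapsed, rate in simulate(1000):
    print(f"{calls} calls over {elapsed:.3f} seconds ({rate:.0f} calls/sec)")
```

The first checkpoint reports an average well above the target because of the initial burst. Later checkpoints settle at the target once the many short pauses have absorbed the backlog, which mirrors the falling rates in the ldu output above.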

In both the lrm and ldu cases, the USR1 signal will cause the utility to display the current i/o rate(s), as seen in the above example. The USR1 signal can be delivered to the utility by finding its process id (using ps) and then issuing the kill -USR1 <pid> command on the compute node on which lrm or ldu is running:

```
[traine@n012 ~]$ ps -u traine
  PID TTY          TIME CMD
 7059 ?        00:00:00 sshd
 7060 pts/12   00:00:00 bash
 8834 pts/12   00:00:00 ps
 6000 ?        00:00:00 sshd
 6001 pts/12   00:00:00 bash
 6008 pts/12   00:00:00 ldu
[traine@n012 ~]$ kill -USR1 6008
```
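For readers curious how a program can respond to USR1 in this way, the Python sketch below installs a handler that records a rate report and then sends the signal to itself, which is what kill -USR1 <pid> does from another shell. It only illustrates the mechanism on a Unix-like system and is not taken from the lrm or ldu sources. The counter and the report format are made up for the example.

```python
import os
import signal
import time

stats = {"calls": 0, "started": time.monotonic()}
reports = []


def report_rate(signum, frame):
    """Signal handler: record the average call rate so far."""
    elapsed = time.monotonic() - stats["started"]
    rate = stats["calls"] / elapsed if elapsed > 0 else 0.0
    reports.append(
        f"stat: {stats['calls']} calls over {elapsed:.3f} seconds "
        f"({rate:.0f} calls/sec)"
    )


# Ask the operating system to call report_rate whenever USR1 arrives.
signal.signal(signal.SIGUSR1, report_rate)

# Stand-in for the real work: count some "calls".
for _ in range(1000):
    stats["calls"] += 1

# Equivalent to running `kill -USR1 <pid>` against this process.
os.kill(os.getpid(), signal.SIGUSR1)
time.sleep(0.1)  # give the interpreter a moment to run the handler

print(reports[-1] if reports else "no report yet")
```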