Getting started on DARWIN

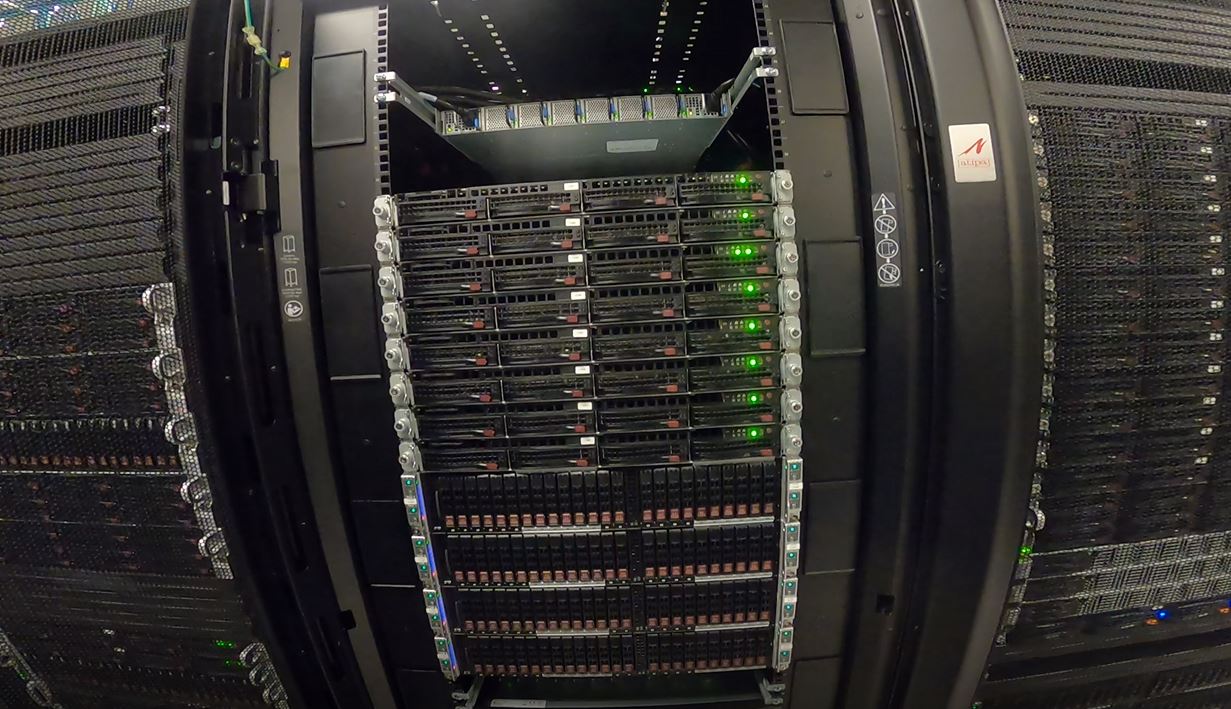

DARWIN (Delaware Advanced Research Workforce and Innovation Network) is a big data and high performance computing system designed to catalyze Delaware research and education, funded by a $1.4 million grant from the National Science Foundation (NSF). This award establishes the DARWIN computing system as an XSEDE Level 2 Service Provider in Delaware, contributing 20% of DARWIN's resources to XSEDE (Extreme Science and Engineering Discovery Environment), which transitioned to ACCESS (Advanced Cyberinfrastructure Coordination Ecosystem: Services & Support) as of September 1, 2022. DARWIN has 105 compute nodes with a total of 6672 cores, 22 GPUs, 100TB of memory, and 1.2PB of disk storage. See compute nodes and storage for complete details on architecture.

Configuration

Overview

The DARWIN cluster is set up to be very similar to the existing Caviness cluster, and will be familiar to those currently using Caviness. However, DARWIN is an NSF-funded HPC resource available via a committee-reviewed allocation request process similar to ACCESS (XSEDE) allocations.

An HPC cluster always has one or more public-facing systems known as login nodes. The login nodes are supplemented by many compute nodes which are connected by a private network. One or more head nodes run programs that manage and facilitate the functioning of the cluster. (In some clusters, the head node functionality is present on the login nodes.) Each compute node typically has several multi-core processors that share memory. Finally, all the nodes share one or more filesystems over a high-speed network.

Login nodes

Login (head) nodes are the gateway into the cluster and are shared by all cluster users. Their computing environment is a full standard variant of Linux configured for scientific applications. This includes command documentation (man pages), scripting tools, compiler suites, debugging/profiling tools, and application software. In addition, the login nodes have several tools to help you move files between the HPC filesystems and your local machine, other clusters, and web-based services.

If your work requires highly interactive graphics and animations, these are best done on your local workstation rather than on the cluster. Use the cluster to generate files containing the graphics information, and download them from the HPC system to your local system for visualization.

When you use SSH to connect to darwin.hpc.udel.edu your computer will choose one of the login (head) nodes at random. The default command line prompt clearly indicates to which login node you have connected: for example, [traine@login00.darwin ~]$ is shown for account traine when connected to login node login00.darwin.hpc.udel.edu.

Use the screen or tmux utility to preserve your session after logout.

Resource limits are of critical importance on cluster login nodes. Without effective limits in place, a single user could monopolize a login node and leave the cluster inaccessible to others. Every user is restricted to all but the first 16 CPU cores and 8 GiB of memory on the DARWIN cluster login nodes, and CPU time is divided equally among all processes.

Compute nodes

There are many compute nodes with different configurations. Each node consists of multi-core processors (CPUs), memory, and local disk space. Nodes can have different OS versions or OS configurations, but this document assumes all the compute nodes have the same OS and almost the same configuration. Some nodes may have more cores, more memory, GPUs, or more disk.

All compute nodes are now available and configured for use. Most compute node types have 64 cores per node (the V100 row below, 144 cores across 3 nodes, works out to 48 per node), so the compute resources available are the following:

| Compute Node | Number of Nodes | Node Names | Total Cores | Memory per Node | Total Memory | Total GPUs |
|---|---|---|---|---|---|---|
| Standard | 48 | r1n00 - r1n47 | 3,072 | 512 GiB | 24 TiB | |
| Large Memory | 32 | r2l00 - r2l31 | 2,048 | 1,024 GiB | 32 TiB | |
| Extra-Large Memory | 11 | r2x00 - r2x10 | 704 | 2,048 GiB | 22 TiB | |
| nVidia-T4 (16GB) | 9 | r1t00 - r1t07, r2t08 | 576 | 512 GiB | 4.5 TiB | 9 |
| nVidia-V100 (32GB) | 3 | r2v00 - r2v02 | 144 | 768 GiB | 2.25 TiB | 12 |
| AMD-MI50 | 1 | r2m00 | 64 | 512 GiB | 0.5 TiB | 1 |
| Extended Memory | 1 | r2e00 | 64 | 1,024 GiB + 2.73 TiB | 3.73 TiB | |
| Total | 105 | | 6,672 | | 88.98 TiB | 22 |
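The totals in the table can be cross-checked with a little shell arithmetic. The following is only a sketch that re-adds the per-type figures copied from the rows above; the helper name `add_up` is illustrative and not part of any DARWIN tooling.

```shell
# Add up a list of integers (helper name is illustrative).
add_up() {
  total=0
  for n in "$@"; do
    total=$((total + n))
  done
  echo "$total"
}

# Figures copied from the table rows, in order:
# Standard, Large Memory, Extra-Large Memory, T4, V100, MI50, Extended Memory.
total_nodes=$(add_up 48 32 11 9 3 1 1)
total_cores=$(add_up 3072 2048 704 576 144 64 64)
total_gpus=$(add_up 9 12 1)

echo "nodes=$total_nodes cores=$total_cores gpus=$total_gpus"

# Cores per V100 node implied by the table (144 cores over 3 nodes).
v100_cores_per_node=$((144 / 3))
echo "v100_cores_per_node=$v100_cores_per_node"
```

The sums match the Total row (105 nodes, 6,672 cores, 22 GPUs), and the last line suggests the V100 nodes carry 48 cores each.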

The standard Linux installation on the compute nodes is configured to support just the running of your jobs, particularly parallel jobs. For example, there are no man pages on the compute nodes. All compute nodes will have full development headers and libraries.

Commercial applications, and normally your programs, will use a layer of abstraction called a programming model. Consult the cluster specific documentation for advanced techniques to take advantage of the low level architecture.

Storage

Home filesystem

Each DARWIN user receives a home directory (/home/<uid>) that will remain the same during and after the early access period. This storage has slower access with a limit of 20 GiB. It should be used for personal software installs and shell configuration files.

Lustre high-performance filesystem

Lustre is designed to use parallel I/O techniques to reduce file-access time. The Lustre filesystems in use at UD are composed of many physical disks using RAID technologies to give resilience, data integrity, and parallelism at multiple levels. There is approximately 1.1 PiB of Lustre storage available on DARWIN. It uses high-bandwidth interconnects such as Mellanox HDR100. Lustre should be used for storing input files, supporting data files, work files, and output files associated with computational tasks run on the cluster.

- Each allocation will be assigned a workgroup storage in the Lustre directory (/lustre/«workgroup»/).
- Each workgroup storage will have a users directory (/lustre/«workgroup»/users/«uid») for each user of the workgroup to be used as a personal directory for running jobs and storing larger amounts of data.
- Each workgroup storage will have a software and VALET directory (/lustre/«workgroup»/sw/ and /lustre/«workgroup»/sw/valet) to allow users of the workgroup to install software and create VALET package files that need to be shared by others in the workgroup.
- There will be a quota limit set based on the amount of storage approved for your allocation for the workgroup storage.
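As a quick illustration of the layout just described, the sketch below prints the four Lustre paths for a given workgroup and uid. The function name `workgroup_dirs` is hypothetical; the sample values `it_css` and `1201` are the ones used in this page's own example session.

```shell
# Print the Lustre directories described above for one workgroup and user.
# Arguments: workgroup name, numeric uid (both supplied by the caller).
workgroup_dirs() {
  wg=$1
  uid=$2
  printf '%s\n' \
    "/lustre/$wg" \
    "/lustre/$wg/users/$uid" \
    "/lustre/$wg/sw" \
    "/lustre/$wg/sw/valet"
}

workgroup_dirs it_css 1201
```

On the cluster itself you would normally rely on the environment variables set by the workgroup command rather than building these paths by hand.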

Local filesystems

Each node has an internal, locally connected disk. Its capacity is measured in terabytes. Each compute node on DARWIN has a 1.75 TiB SSD local scratch filesystem disk. Part of the local disk is used for system tasks such as memory management, which might include cache memory and virtual memory. The remainder of the disk is ideal for applications that need a moderate amount of scratch storage for the duration of a job's run. That portion is referred to as the node scratch filesystem.

Each node scratch filesystem disk is only accessible by the node in which it is physically installed. The job scheduling system creates a temporary directory associated with each running job on this filesystem. When your job terminates, the job scheduler automatically erases that directory and its contents.
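Because the per-job directory is erased when the job ends, a job typically stages data in, works on local scratch, and copies results out before finishing. The sketch below shows that pattern under one loud assumption: that the scheduler exposes the per-job directory through the `TMPDIR` environment variable (it falls back to `/tmp` otherwise). The file names and the `sort` step are placeholders for real work.

```shell
# Assumption: the scheduler exports the per-job scratch directory as TMPDIR.
# Fall back to /tmp so the sketch also runs outside a job.
scratch_root="${TMPDIR:-/tmp}"

# Private working directory on node-local scratch.
work_dir=$(mktemp -d "$scratch_root/stage.XXXXXX")

# Stand-in for a results location on shared storage (hypothetical; in a real
# job this would be a directory under your workgroup's Lustre space).
results_dir=$(mktemp -d "$scratch_root/results.XXXXXX")

# 1. Stage input onto local scratch (here, a tiny generated file).
printf 'alpha\nbeta\ngamma\n' > "$work_dir/input.txt"

# 2. Do the I/O-heavy work against local scratch (a sort as a stand-in).
sort -r "$work_dir/input.txt" > "$work_dir/output.txt"

# 3. Copy results off the node before the job ends; the scheduler erases
#    the per-job directory afterwards.
cp "$work_dir/output.txt" "$results_dir/"

# 4. Clean up what this script created.
rm -rf "$work_dir"
echo "results saved in $results_dir"
```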

More detailed information about DARWIN storage and quotas can be found on the sidebar under Storage.

Software

A list of installed software that IT builds and maintains for DARWIN users can be found by logging into DARWIN and using the VALET command vpkg_list.

Documentation for all software is organized in alphabetical order on the sidebar under Software. There will likely not be a cluster-specific details section for DARWIN; however, the details given for Caviness should still be applicable for now.

There will not be a full set of software during early access and testing, but we will be continually installing and updating software. Installation priority will go to compilers, system libraries, and highly utilized software packages. Please DO let us know if there are packages that you would like to use on DARWIN, as that will help us prioritize user needs, but understand that we may not be able to install software requests in a timely manner.

Please review the following documents if you are planning to compile and install your own software:

- High Performance Computing (HPC) Tuning Guide for AMD EPYC™ 7002 Series Processors guide for getting started tuning AMD 2nd Gen EPYC™ Processor based systems for HPC workloads. This is not an all-inclusive guide and some items may have similar, but different, names in specific OEM systems (e.g. OEM-specific BIOS settings). Every HPC workload varies in its performance characteristics. While this guide is a good starting point, you are encouraged to perform your own performance testing for additional tuning. This guide also provides suggestions on which items should be the focus of additional, application-specific tuning (November 2020).

- HPC Tuning Guide for AMD EPYC™ Processors guide intended for vendors, system integrators, resellers, system managers and developers who are interested in EPYC system configuration details. There is also a discussion on the AMD EPYC software development environment, and we include four appendices on how to install and run the HPL, HPCG, DGEMM, and STREAM benchmarks. The results produced are ‘good’ but are not necessarily exhaustively tested across a variety of compilers with their optimization flags (December 2018).

- AMD EPYC™ 7xx2-series Processors Compiler Options Quick Reference Guide. Note, however, that we do not have the AOCC compiler (with Flang - Fortran Front-End) installed on DARWIN.

Scheduler

DARWIN uses the Slurm scheduler, like Caviness; Slurm is the most common scheduler among ACCESS (XSEDE) resources. Slurm on DARWIN is configured as fair share, with each user being given equal shares to access the current HPC resources available on DARWIN.

Queues (Partitions)

Partitions have been created to align with allocation requests moving forward based on different node types. There is no default partition, and you must specify exactly one partition at a time. It is not possible to specify multiple partitions using Slurm to span different node types.
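The one-partition rule can be checked before submitting. The following is a minimal sketch, not a DARWIN-provided tool: the function name `check_partition` is hypothetical, and the partition name `standard` is assumed for illustration (see Queues for the real names). Slurm in general accepts a comma-separated partition list, which is why the check looks for a comma.

```shell
# Accept a partition request only if it names exactly one partition.
# This mirrors the DARWIN policy described above; it does not query Slurm.
check_partition() {
  case "$1" in
    "")
      echo "error: no partition given (there is no default partition)" >&2
      return 1 ;;
    *,*)
      echo "error: specify one partition, not a list: $1" >&2
      return 1 ;;
    *)
      echo "ok: $1" ;;
  esac
}

# "standard" is an assumed partition name used only for illustration.
check_partition standard
```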

See Queues on the sidebar for detailed information about the available partitions on DARWIN.

Run Jobs

In order to schedule any job (interactively or batch) on the DARWIN cluster, you must set your workgroup to define your cluster group. Each research group has been assigned a unique workgroup. Each research group should have received this information in a welcome email. For example,

workgroup -g it_css

will enter the workgroup for it_css. You will know if you are in your workgroup based on the change in your bash prompt. See the following example for user traine:

    [traine@login00.darwin ~]$ workgroup -g it_css
    [(it_css:traine)@login00.darwin ~]$ printenv USER HOME WORKDIR WORKGROUP WORKDIR_USER
    traine
    /home/1201
    /lustre/it_css
    it_css
    /lustre/it_css/users/1201
    [(it_css:traine)@login00.darwin ~]$
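Since jobs cannot be scheduled without an active workgroup, a script can guard against a missing one by testing the `WORKGROUP` variable shown in the session above. This is a sketch only; the function name `require_workgroup` is hypothetical.

```shell
# Refuse to continue unless a workgroup is active in this shell.
# WORKGROUP and WORKDIR are the variables shown in the session above.
require_workgroup() {
  if [ -z "${WORKGROUP:-}" ]; then
    echo "no workgroup set; run: workgroup -g <your_workgroup>" >&2
    return 1
  fi
  echo "workgroup=$WORKGROUP workdir=${WORKDIR:-unknown}"
}

# Example call; prints a hint on stderr if no workgroup is active.
require_workgroup || true
```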

Now we can use salloc to start an interactive job or sbatch to submit a batch job, as long as a partition is also specified. See DARWIN Run Jobs, Schedule Jobs and Managing Jobs wiki pages for more help about Slurm including how to specify resources and check on the status of your jobs.

See /opt/shared/templates/slurm/ for updated or new templates to use as job scripts to run generic or specific applications designed to provide the best performance on DARWIN.

See Run jobs on the sidebar for detailed information about running jobs on DARWIN, and specifically Schedule job options for memory, time, GPUs, etc.
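To make the batch workflow concrete, the sketch below writes a minimal Slurm job script to a file. It is not one of the cluster's templates, which should be preferred for real work. The file name `hello_darwin.qs`, the partition name `standard`, and the resource values are all assumptions chosen for illustration; the `#SBATCH` options themselves are standard Slurm options.

```shell
# Write a minimal batch script to a file (file name is illustrative).
job_script="hello_darwin.qs"

cat > "$job_script" <<'EOF'
#!/bin/bash
# Assumed partition name; exactly one partition must be given.
#SBATCH --partition=standard
#SBATCH --job-name=hello
#SBATCH --nodes=1
#SBATCH --ntasks=1
#SBATCH --mem=1G
#SBATCH --time=00:05:00

echo "Hello from $(hostname)"
EOF

echo "wrote $job_script"

# On a DARWIN login node, after entering your workgroup:
#   workgroup -g <your_workgroup>
#   sbatch hello_darwin.qs
```

For an interactive session, the same partition option can be passed to salloc instead, for example `salloc --partition=standard` under the same naming assumption.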

Help

System or account problems

UD allocations

To report a problem or provide feedback, please submit a Research Computing High Performance Computing (HPC) Clusters Help Request and complete the form including DARWIN in the short description, problem type High Performance Computing, and your problem details in the description.

ACCESS (XSEDE) allocations

Passwords are not set for ACCESS accounts on DARWIN. If this is a new account, you must set a password using the password reset web application at https://idp.hpc.udel.edu/access-password-reset/. See Logging on to DARWIN for ACCESS (XSEDE) Allocation Users for complete details.

To report a problem or provide feedback, submit a help desk ticket on the ACCESS Portal and complete the form, selecting DARWIN as the resource and entering your problem details in the problem synopsis, problem description, and tag fields so that your question is routed more quickly to the research support team. Provide enough details (including full paths of batch script files, log files, or important input/output files) that our consultants can begin to work on your problem without having to ask you basic initial questions.

Ask or tell the HPC community

hpc-ask is a Google group established to stimulate interactions within UD’s broader HPC community and is based on members helping members. This is a great venue to post a question about HPC, start a discussion, or share an upcoming event with the community. Anyone may request membership. Messages are sent as a daily summary to all group members. This list is archived, public, and searchable by anyone.

Publication and Grant Writing Resources

Please refer to the NSF award information when writing a proposal or requesting allocations on DARWIN. We require all allocation recipients to acknowledge their allocation awards using the following standard text: “This research was supported in part through the use of DARWIN computing system: DARWIN – A Resource for Computational and Data-intensive Research at the University of Delaware and in the Delaware Region, Rudolf Eigenmann, Benjamin E. Bagozzi, Arthi Jayaraman, William Totten, and Cathy H. Wu, University of Delaware, 2021, URL: https://udspace.udel.edu/handle/19716/29071”

If you need SU to dollar conversions based on your DARWIN allocation for grant proposals or reports, please submit a Research Computing High Performance Computing (HPC) Clusters Help Request and complete the form, including DARWIN in the short description and indicating in the description field that you are requesting SU to dollar conversions.